The Terminator is often people’s reference point for artificial intelligence (AI), especially when they worry that technology will be the end of civilization. However, on the other end of the AI spectrum is the beloved, marshmallow fluff Baymax, the helper robot providing assistance to those in his presence.

The reality of AI sits somewhere between these two extremes. For security teams, AI initially seemed like a revolutionary technology that would offer faster detection and automated analysis. However, the reality was that many security teams found themselves working with opaque models that offered no way to validate outputs.

To gain value from AI in cybersecurity, decision-makers need to understand the technology’s benefits and challenges so that they can appropriately evaluate cybersecurity solutions.

What Is AI in Cybersecurity?

AI in cybersecurity means applying artificial intelligence technologies to prevent, detect, and respond to cyber threats so that security teams can move beyond the limitations that come from traditional, rule-based systems. Algorithms can learn from data, identify patterns, and make predictions that streamline daily operations. Two core engines underlie these capabilities.

Machine Learning

Machine learning (ML) models learn from large security datasets that include high-volume telemetry, such as:

- Malicious and benign files.

- Network traffic.

- User behavior.

As the models learn to recognize threat characteristics, they can identify similar attack types in the future. Supervised AI models learn from labeled data to identify expected or “correct” outcomes. Unsupervised models learn from unlabeled data by discovering patterns, structures, or anomalies.
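As a rough illustration of the difference, the sketch below uses invented failed-login counts. The supervised function derives a cutoff from labeled examples, while the unsupervised one has no labels and simply flags values that sit far from the rest of the data. Every name and number here is hypothetical, and real models use far richer features.

```python
from statistics import mean, pstdev

# Hypothetical feature: failed logins per hour, with analyst-provided labels.
labeled = [(2, "benign"), (3, "benign"), (4, "benign"),
           (40, "malicious"), (55, "malicious")]

def fit_threshold(samples):
    """Supervised: place a cutoff midway between the class means of labeled data."""
    benign = [x for x, label in samples if label == "benign"]
    malicious = [x for x, label in samples if label == "malicious"]
    return (mean(benign) + mean(malicious)) / 2

def classify(x, threshold):
    return "malicious" if x > threshold else "benign"

def find_outliers(values, z_cutoff=2.0):
    """Unsupervised: no labels, flag values far from the mean of the data itself."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []
    return [v for v in values if abs(v - mu) / sigma > z_cutoff]

threshold = fit_threshold(labeled)
unlabeled = [2, 3, 3, 4, 2, 3, 4, 3, 2, 60]
outliers = find_outliers(unlabeled)
```

The supervised path can name a verdict because it was told what "malicious" looks like. The unsupervised path can only say that a value is unusual.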

When working with unsupervised AI models, security teams should understand that these technologies sometimes lack the context to differentiate between malicious activity and activity that is merely unusual, which can lead to false positives. Additionally, without that context, a model may learn a baseline that already includes hidden attacker activity and treat it as normal, which helps advanced persistent threats (APTs) evade detection.
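The second risk is easier to see with numbers. The sketch below, which assumes a simple z-score detector and made-up transfer volumes, scores the same 500 MB transfer against a clean baseline and against a baseline learned while an attacker was already moving data.

```python
from statistics import mean, pstdev

def z_score(value, baseline):
    """How many standard deviations `value` sits from the baseline mean."""
    sigma = pstdev(baseline)
    return 0.0 if sigma == 0 else (value - mean(baseline)) / sigma

# Hypothetical daily outbound transfer volumes in MB for one host.
clean_baseline = [100, 110, 95, 105, 90, 100, 105, 95]
# Same host, but exfiltration was already happening while the baseline was learned.
poisoned_baseline = [100, 110, 480, 105, 520, 100, 495, 95]

todays_transfer = 500
clean_z = z_score(todays_transfer, clean_baseline)        # far above a cutoff of 3
poisoned_z = z_score(todays_transfer, poisoned_baseline)  # below a cutoff of 3
```

With a typical cutoff of three standard deviations, the clean baseline flags the transfer and the poisoned one lets it through.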

Deep Learning

Deep learning is a subset of ML that uses multi-layered neural networks for more complex and nuanced processing. Neural networks pass data through successive layers of connected nodes and adjust the weights on those connections to recognize patterns.
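To make "layers" and "adjusting weights" concrete, here is a toy network with one hidden layer, written in plain Python. It is a sketch for intuition only: the inputs, starting weights, and learning rate are arbitrary assumptions, and production models have many more layers and are built with dedicated frameworks.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(x, w_hidden, w_out):
    """Pass inputs through one hidden layer, then a single output node."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x))) for ws in w_hidden]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)))
    return hidden, output

def train_step(x, target, w_hidden, w_out, lr=0.5):
    """One gradient-descent update on squared error (backpropagation)."""
    hidden, output = forward(x, w_hidden, w_out)
    # Error signal at the output node.
    delta_out = (output - target) * output * (1 - output)
    # Error signal pushed back to each hidden node (uses the pre-update weights).
    delta_hidden = [delta_out * w * h * (1 - h) for w, h in zip(w_out, hidden)]
    new_w_out = [w - lr * delta_out * h for w, h in zip(w_out, hidden)]
    new_w_hidden = [
        [w - lr * d * xi for w, xi in zip(ws, x)]
        for ws, d in zip(w_hidden, delta_hidden)
    ]
    return new_w_hidden, new_w_out

# Hypothetical scaled features for one event labeled malicious (target = 1).
x, target = [0.9, 0.8], 1.0
w_hidden = [[0.1, -0.2], [0.3, 0.4]]
w_out = [0.2, -0.1]

_, before = forward(x, w_hidden, w_out)
for _ in range(200):
    w_hidden, w_out = train_step(x, target, w_hidden, w_out)
_, after = forward(x, w_hidden, w_out)
```

After training, the output for this event moves closer to 1. Note that the learned weights are just numbers, which hints at why such models are hard to explain.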

These models can analyze massive, complex data sets to detect subtle attack patterns that traditional ML might miss. However, security teams struggle to explain and trust these more opaque, resource-intensive detections, especially if they have no way to verify whether the model was trained appropriately or carries a bias.

What Is the Difference between AI for Cybersecurity and AI Security?

Although the two terms are occasionally used interchangeably, they describe distinct but related concepts.

AI for cybersecurity, sometimes called cyber AI, uses AI as a defensive tool, applying models to strengthen the organization’s security posture. By leveraging AI’s analytical power, security teams can automate threat detection, accelerate incident response, and predict potential threats.

AI security focuses on protecting AI systems themselves from attack. As organizations increasingly rely on AI models for critical functions, adversaries increasingly target those models.

What Are Some Key Use Cases for AI in Cybersecurity?

According to research, global spending on AI and AI-enabled systems will exceed $500 billion annually by 2026, with security and risk management among the fastest-growing categories. While many organizations have adopted some form of AI for business operations, they should consider the most effective use cases for security as they look for solutions.

Identity and Access Management (IAM)

By adopting AI for IAM, organizations can perform dynamic risk assessments based on real-time contextual analysis. User and Entity Behavior Analytics (UEBA) combines and correlates data across:

- User location: Tracking whether users log in from their typical geographic area.

- Time of day: Identifying whether logins are during or outside of normal business hours.

- Resource accessed: Determining whether the user is accessing information that they should be accessing.

AI-powered systems can detect anomalous login attempts that suggest a compromised account and automatically trigger multi-factor authentication (MFA) or block access, creating a more adaptive and secure authentication process.
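A minimal sketch of that adaptive flow might look like the following. The profile, the one-point-per-signal scoring, and the action names are all assumptions made for illustration. A real UEBA system would learn profiles from history and weight signals rather than count them.

```python
from dataclasses import dataclass

@dataclass
class LoginEvent:
    user: str
    country: str
    hour: int       # 0-23, local time
    resource: str

# Hypothetical per-user profile; a real system would learn this from history.
PROFILE = {
    "alice": {
        "countries": {"US"},
        "work_hours": range(8, 19),
        "resources": {"crm", "wiki"},
    },
}

def risk_score(event):
    """Add one point for each signal that deviates from the user's profile."""
    profile = PROFILE[event.user]
    score = 0
    score += event.country not in profile["countries"]
    score += event.hour not in profile["work_hours"]
    score += event.resource not in profile["resources"]
    return score

def decide(event):
    """Map the score to an action: allow, step up to MFA, or block."""
    score = risk_score(event)
    if score == 0:
        return "allow"
    if score == 1:
        return "require_mfa"
    return "block"
```

For example, `decide(LoginEvent("alice", "US", 23, "crm"))` steps up to MFA because only the time of day is unusual.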

Endpoint Security and Management

Attackers target a wide variety of endpoints, including laptops, servers, and mobile devices. AI-driven Endpoint Detection and Response (EDR) solutions monitor endpoint activity continuously. AI solutions can move beyond known malware signatures to identify suspicious behaviors that indicate a potential breach, like unusual file modifications or network connections. These capabilities can help detect fileless malware or zero-day exploits that other tools might miss.

Cloud Security

Using AI, security teams can continuously scan for misconfigurations, compliance violations, and vulnerabilities across multi-cloud environments. AI can analyze large volumes of logs to detect threats specific to cloud services and provide risk-based prioritization for actionable recommendations.

Cyber Threat Detection

AI algorithms excel at analyzing immense volumes of data from firewalls, network devices, and security logs to identify subtle indicators of compromise. By establishing a baseline of normal network activity, AI can instantly flag deviations and anomalies that could signal an active threat.

Incident Investigation and Response

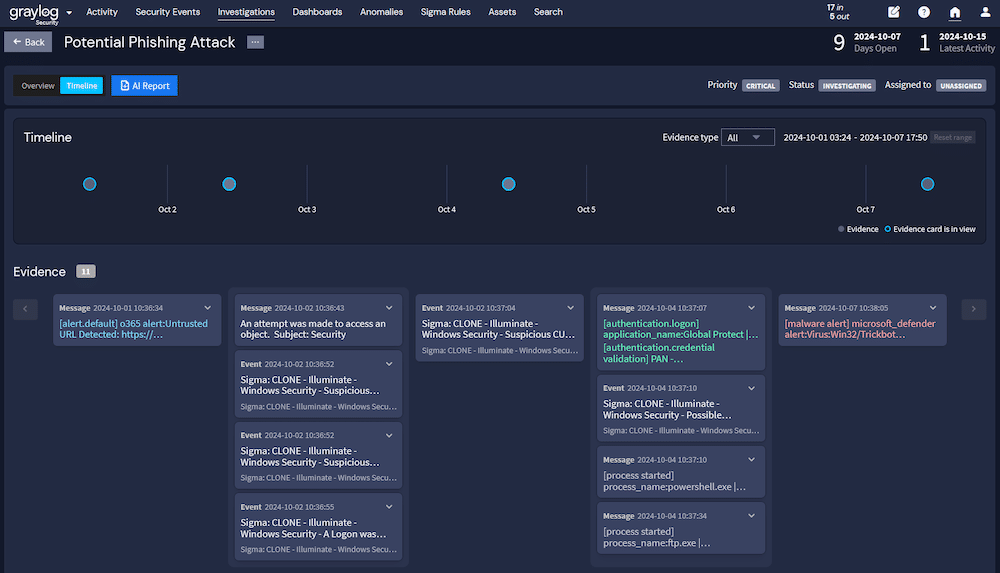

Security teams can use AI to automate an investigation’s initial stages. By correlating alerts from various security tools and enriching them with threat intelligence, AI can provide a consolidated attack timeline that reduces mean time to respond (MTTR).
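Stripped of the AI, the correlation and enrichment step reduces to something like the sketch below. The alert fields, host names, and threat-intelligence entry are invented for illustration, and the grouping key (host) is an assumption. Real tools correlate on many more attributes.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical alerts from different tools; all fields are invented.
alerts = [
    {"time": "2025-01-10T09:15:00", "host": "ws-42", "source": "edr",
     "msg": "suspicious PowerShell", "ip": None},
    {"time": "2025-01-10T09:02:00", "host": "ws-42", "source": "email",
     "msg": "phishing link clicked", "ip": None},
    {"time": "2025-01-10T09:20:00", "host": "ws-42", "source": "firewall",
     "msg": "outbound connection", "ip": "203.0.113.7"},
    {"time": "2025-01-10T11:00:00", "host": "srv-01", "source": "edr",
     "msg": "new service installed", "ip": None},
]

# Hypothetical threat-intelligence lookup (203.0.113.0/24 is a documentation range).
THREAT_INTEL = {"203.0.113.7": "known command-and-control server"}

def build_timelines(alerts):
    """Group alerts by host, enrich with threat intel, and sort chronologically."""
    timelines = defaultdict(list)
    for alert in alerts:
        enriched = dict(alert, intel=THREAT_INTEL.get(alert["ip"]))
        timelines[alert["host"]].append(enriched)
    for events in timelines.values():
        events.sort(key=lambda a: datetime.fromisoformat(a["time"]))
    return dict(timelines)

timelines = build_timelines(alerts)
```

Three alerts from three tools become one ordered story for `ws-42`: a phishing click, then PowerShell activity, then a connection to a flagged address.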

Phishing Attacks and Social Engineering Prevention

As adversaries incorporate AI into their email attacks, security teams can combat risk with their own AI. AI-powered email security gateways can use natural language processing (NLP) to review email content for suspicious language, urgency, and tone as well as analyze links and attachments.
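The sketch below is a deliberately simple keyword heuristic, not an NLP model, but it shows the kinds of signals such a gateway looks for and how they can be reported as readable reasons. The term list and the two-hit threshold are assumptions chosen for illustration.

```python
import re

# Hypothetical list of urgency and credential terms.
URGENCY_TERMS = {"urgent", "immediately", "suspended", "verify", "password", "expires"}

def phishing_signals(subject, body):
    """Return a list of human-readable reasons an email looks suspicious."""
    reasons = []
    words = set(re.findall(r"[a-z]+", f"{subject} {body}".lower()))
    hits = sorted(words & URGENCY_TERMS)
    if len(hits) >= 2:
        reasons.append(f"urgency language: {', '.join(hits)}")
    # Links that point at a raw IP address instead of a domain name.
    if re.search(r"https?://\d{1,3}(?:\.\d{1,3}){3}", body):
        reasons.append("link to raw IP address")
    return reasons
```

A trained language model replaces the fixed word list with learned patterns of tone and intent, which is what lets it catch messages that avoid obvious trigger words.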

What Are the Challenges of Using AI for Cybersecurity?

Deploying AI in cybersecurity comes with various technical and operational challenges that organizations should consider before implementing the new technology.

Adversarial AI

Threat actors also use AI to carry out their attacks. In November 2025, Google Threat Intelligence Group identified novel AI-enabled malware in active operations. Malware families like PROMPTFLUX and PROMPTSTEAL use large language models (LLMs) to generate malicious scripts and evade malware detection tools, rather than hard-coding that functionality into the malware itself. While AI-enabled security tools offer earlier threat detection, security teams need insight into how the AI analyzes signals so they can determine whether it can respond appropriately to these new threats.

Data Quality and Bias

Any AI is only as good as the data fed into it. AI models in cybersecurity fail when training data is incomplete, biased, or outdated, causing them to miss real threats or overreact to normal behavior. Bias in data can also systematically skew detections, reinforcing blind spots and creating false confidence in flawed outcomes. For example, when model training focuses on enterprise network data, detections can become biased toward the corporate environment, and the model can fail to identify real attacks in cloud or operational technology environments.

The “Black Box” Problem

Many advanced AI models, particularly in deep learning, are “black boxes,” meaning users cannot easily interpret their decision-making processes. These opaque systems create operational challenges, including:

- Decision validation: Without the context to validate the alert, analysts spend time trying to understand the reason the AI flagged a threat, swapping one alert-fatigue problem for another.

- Compliance documentation: Organizations need to provide documentation showing how the AI system reached a decision, including how data was used and the process’s compliance posture.

- Data control: Organizations need to ensure that they maintain control and ownership of security data, which becomes a problem when vendor-locked AI requires sending logs, telemetry, or operational data to third-party infrastructure.

High Cost and Complexity

Implementing and maintaining sophisticated AI requires significant investments in staffing, technology, and infrastructure. Most organizations have to rely on a third-party vendor and its “black box” AI because building a homegrown system comes with costs beyond initial deployment. As the threat landscape evolves, the organization must constantly retrain and integrate models, increasing operational costs.

6 Best Practices for Evaluating an AI Solution for Cybersecurity

AI in cybersecurity has the potential to change how security analysts do their jobs and protect systems more effectively. However, evaluating an AI solution for cybersecurity means understanding how the model works and how the vendor implements it.

Require Explainability in AI Decisions

AI-driven solutions should provide clear reasoning behind each alert or action, allowing security analysts to understand why the system flagged a potential threat. Explainability builds trust in automated systems, reduces false positives, and prevents teams from blindly following opaque recommendations. When an AI solution can articulate its logic, teams can validate, adjust, and respond faster, which leads to more reliable threat detection and greater analyst confidence.

Link Insights Back to Real Data

Effective AI connects findings to concrete logs, metrics, or event records rather than outputting abstract scores. When teams can trace alerts back to the source data, they gain confidence in investigation results and can verify them more quickly. Linking insights to actual evidence also enables analysts to identify patterns, spot anomalies, and take informed actions without relying solely on AI interpretations.
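One way to picture an alert that is both explainable and traceable is as a record that carries its reasons and pointers to the underlying evidence alongside the score. The structure below is a hypothetical sketch, not any vendor's schema, and the log lines are invented.

```python
from dataclasses import dataclass, field

# Hypothetical raw log store keyed by record ID.
LOGS = {
    "log-1001": "2025-01-10T02:14:07 auth failure user=svc-backup src=198.51.100.23",
    "log-1002": "2025-01-10T02:14:09 auth success user=svc-backup src=198.51.100.23",
    "log-1003": "2025-01-10T02:15:30 file read user=svc-backup path=/finance/payroll.db",
}

@dataclass
class Alert:
    title: str
    score: float
    reasons: list = field(default_factory=list)       # plain-language explanation
    evidence_ids: list = field(default_factory=list)  # pointers to source records

    def evidence(self):
        """Resolve evidence IDs to the raw log lines an analyst can verify."""
        return [LOGS[i] for i in self.evidence_ids]

alert = Alert(
    title="Possible compromised service account",
    score=0.91,
    reasons=[
        "login outside the account's usual hours",
        "first access to /finance/payroll.db",
    ],
    evidence_ids=["log-1002", "log-1003"],
)
```

An analyst who receives only `score=0.91` has to reverse-engineer the finding. One who receives the reasons and can call `alert.evidence()` can confirm or dismiss it from the source records.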

Prioritize Alerts Based on Risk

Not all alerts are created equal. AI should rank anomalies based on severity, asset criticality, and behavioral patterns, so analysts can focus on the most meaningful threats first. By emphasizing risk over volume, teams can reduce alert fatigue, improve response times, and allocate resources efficiently.
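A minimal sketch of risk-based ranking, assuming risk is simply severity multiplied by asset criticality on invented 1 to 5 scales, is shown below. It leaves out behavioral patterns for brevity, and real products define their own scoring.

```python
# Hypothetical 1-5 criticality ratings per asset.
ASSET_CRITICALITY = {
    "domain-controller": 5,
    "payroll-db": 4,
    "dev-laptop": 2,
    "kiosk": 1,
}

alerts = [
    {"id": "a1", "asset": "kiosk", "severity": 5},
    {"id": "a2", "asset": "domain-controller", "severity": 3},
    {"id": "a3", "asset": "dev-laptop", "severity": 4},
    {"id": "a4", "asset": "payroll-db", "severity": 4},
]

def risk(alert):
    """Risk = severity x criticality of the affected asset (default criticality 1)."""
    return alert["severity"] * ASSET_CRITICALITY.get(alert["asset"], 1)

# Highest-risk alerts first.
queue = sorted(alerts, key=risk, reverse=True)
```

Sorting by severity alone would put the kiosk alert first. Factoring in the asset moves it to the bottom of the queue, behind the payroll database and the domain controller.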

Keep People Involved

Technology should empower people rather than taking over for them. Analysts should be able to easily validate AI findings, tune workflows, and decide on escalation steps. Human oversight ensures that AI augments decision-making rather than replacing judgement. By balancing speed and accuracy, organizations can maintain accountability while adapting to the dynamic threat landscape.

Start Small and Test Use Cases

AI adoption should begin with narrow, well-defined scenarios. By testing models in specific workflows or threat types, teams can validate effectiveness, refine parameters, and ensure reliable performance. After this initial evaluation, they can scale to broader environments. Starting small reduces risk, avoids overhype, and builds a foundation for more confident, strategic deployment as organizations expand their AI capabilities.

Validate Against Historical and Live Data

Security teams should be able to validate AI models against historical logs and real-time events to ensure detection accuracy. Historical data helps uncover patterns and reduce false negatives, while live data tests adaptability to evolving threats. Combining both ensures that models remain robust, actionable, and capable of supporting security operations in dynamic and complex environments.
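For the historical half of that validation, a common approach is to replay past events with known outcomes and measure precision and recall. The sketch below assumes eight invented events with ground-truth labels from past investigations.

```python
def precision_recall(predicted, actual):
    """Compare model verdicts with ground truth from past incidents."""
    pairs = list(zip(predicted, actual))
    tp = sum(p and a for p, a in pairs)        # correctly flagged
    fp = sum(p and not a for p, a in pairs)    # flagged but benign
    fn = sum(a and not p for p, a in pairs)    # malicious but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical replay of eight historical events: True = malicious.
actual    = [True, True,  True, False, False, False, False, True]
predicted = [True, False, True, True,  False, False, False, True]

precision, recall = precision_recall(predicted, actual)
```

Low precision means analysts chase false positives, while low recall means real attacks slip through as false negatives. Running the same measurement periodically on recent live data shows whether the model is keeping up as threats change.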

Graylog: Explainable AI in Cybersecurity

Graylog empowers organizations to adopt AI that enhances threat detection while remaining transparent, interpretable, and actionable. Whether integrated into existing workflows, SIEM platforms, or standalone security analytics, explainable AI delivers consistent insights, anomaly detection, and context-rich alerts.

Teams seeking clarity and auditability can leverage models that provide detailed reasoning for each alert, while those prioritizing efficiency benefit from automated prioritization and risk scoring. For security operations balancing speed and oversight, explainable AI enables analysts to make informed decisions confidently. With Graylog’s flexible approach, organizations gain the visibility and control needed to evolve their AI-driven security strategy as threats and environments change.