For as long as I can remember, I have started my day by reading various security news sites to find out what I need to be aware of and which new trends are being spotted. I used to do this on my phone while commuting. Now I work from home, but I still follow the same routine, and that got me thinking: why not feed this information into Graylog?

Well… a few hours and a Python script later, I can use Graylog as a one-stop shop to start my day, checking my daily alerts and my daily news in the same system.

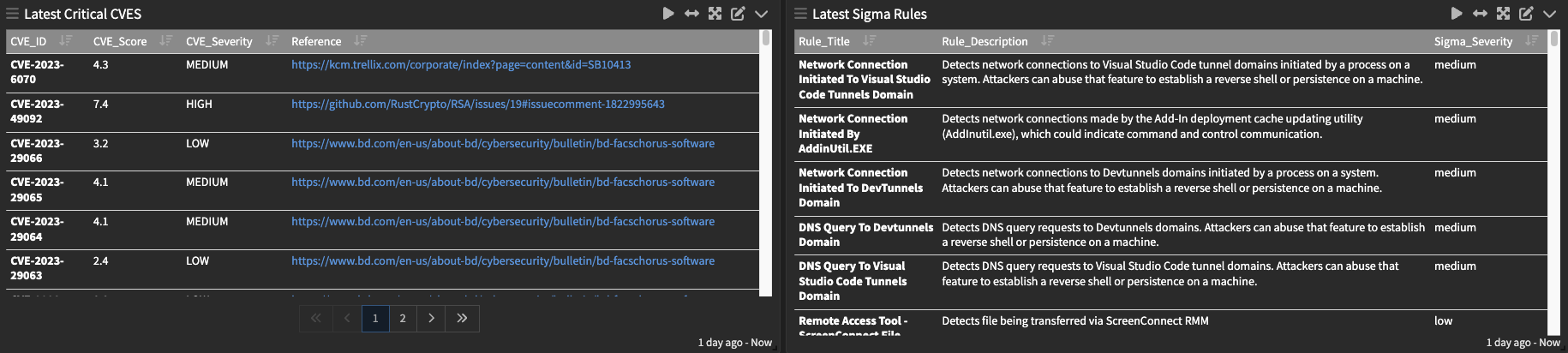

This isn’t a full solution; it’s more of a foundation that can be built on over time. I have created a small Python script that scrapes RSS feeds from some popular sites, grabs the latest CVE information, and gathers information on the latest Sigma rules. This pairs nicely with the Graylog Sigma rule integration: if I see any rules I want to use, I can simply enable them in the Security UI.

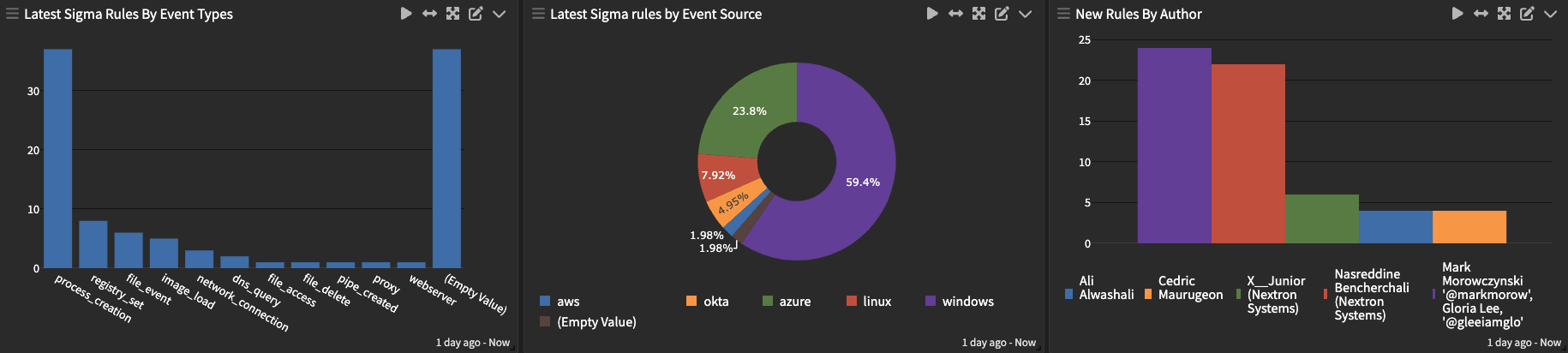

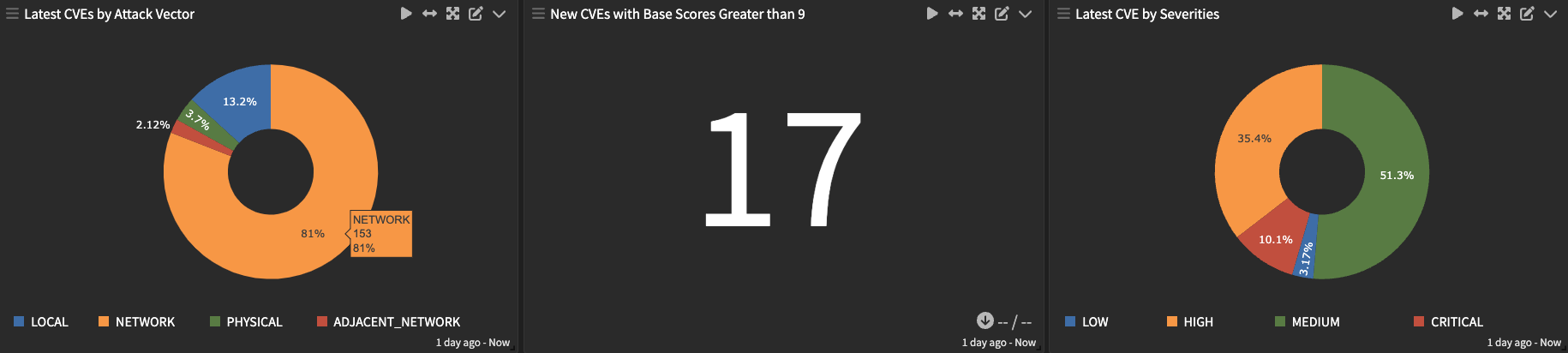

There is more here than just the names and links of the latest items. We can also create visualizations of what the Sigma rules are based on, or break down key information about the latest published CVEs.
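Breakdowns like these depend on the interesting parts of each rule ending up as separate fields. Below is a minimal sketch of how a Sigma rule, already loaded from YAML into a dict, could be mapped to flat GELF additional fields. The keys it reads (`title`, `id`, `status`, `level`, `logsource`, `tags`) are standard Sigma rule fields; the function name and the `_sigma_*` field names are hypothetical and not necessarily what the script uses.

```python
from typing import Any


def sigma_rule_to_fields(rule: dict[str, Any], path: str) -> dict[str, str]:
    """Map a parsed Sigma rule to flat GELF additional fields.

    Field names are hypothetical; GELF requires additional fields to start with "_".
    """
    logsource = rule.get("logsource") or {}
    fields = {
        "_sigma_title": str(rule.get("title", "")),
        "_sigma_id": str(rule.get("id", "")),
        "_sigma_status": str(rule.get("status", "")),
        "_sigma_level": str(rule.get("level", "")),
        # logsource is a nested mapping; lift its keys so they can be charted.
        "_sigma_product": str(logsource.get("product", "")),
        "_sigma_category": str(logsource.get("category", "")),
        "_sigma_service": str(logsource.get("service", "")),
        # tags are a list of strings such as "attack.execution".
        "_sigma_tags": ",".join(rule.get("tags") or []),
        "_sigma_path": path,
    }
    # Drop empty values so Graylog does not store blank fields.
    return {key: value for key, value in fields.items() if value}
```

In a real run, the dict would presumably come from parsing each `*.yml` file under the cloned repo's `rules/` directory, for example with PyYAML's `yaml.safe_load`; that loading step is an assumption and is left out to keep the sketch dependency-free. A field such as `_sigma_product` can then drive a "rules by product" chart.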

So how does it all work?

It’s pretty straightforward. A simple Python script does a few things:

- For Sigma rules, it pulls down the latest copy of the SigmaHQ GitHub repo (https://github.com/SigmaHQ/sigma), crawls through the rule files, and populates events in Graylog.

- For CVEs, it uses the NIST NVD API (https://nvd.nist.gov/developers/products) to pull down the latest CVEs since the last check.

- For news articles, it uses the really handy feedparser library (https://pypi.org/project/feedparser/) to iterate through a list of feeds and pull back the latest articles.

- For all three, it formats the events into GELF and then sends them to a GELF UDP input.
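The last step, formatting an event as GELF and sending it over UDP, can be sketched with the standard library alone. This is a minimal sketch, not the script's actual code: it follows the GELF 1.1 rules that `version`, `host`, and `short_message` are required and that additional fields are prefixed with `_` (with `_id` reserved), compresses with zlib (one of the compressions a GELF UDP input accepts), and assumes every message fits in a single datagram, so it does not implement GELF chunking.

```python
import json
import socket
import time
import zlib
from typing import Any

MAX_UDP_CHUNK = 8192  # GELF UDP datagram limit; larger messages need chunking


def build_gelf(short_message: str, host: str, fields: dict[str, Any]) -> bytes:
    """Build a zlib-compressed GELF 1.1 message."""
    msg: dict[str, Any] = {
        "version": "1.1",
        "host": host,
        "short_message": short_message,
        "timestamp": time.time(),  # seconds since the epoch
    }
    for key, value in fields.items():
        # Additional fields must start with "_", and "_id" is reserved.
        if not key.startswith("_"):
            key = "_" + key
        if key == "_id":
            continue
        msg[key] = value
    return zlib.compress(json.dumps(msg).encode("utf-8"))


def send_gelf(payload: bytes, graylog_ip: str, graylog_port: int) -> None:
    """Send one GELF payload to a GELF UDP input (no chunking in this sketch)."""
    if len(payload) > MAX_UDP_CHUNK:
        raise ValueError("payload too large for a single GELF UDP datagram")
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (graylog_ip, graylog_port))
```

A call for one article might look like `send_gelf(build_gelf(title, "newsfeed", {"link": link}), graylog_ip, graylog_port)`, where `"newsfeed"` is a placeholder source name and `graylog_ip`/`graylog_port` are the settings shown below.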

Using the script is very straightforward: change the items below and let the script do the rest.

```python
# Graylog stuff, must have a running GELF UDP input
graylog_ip = 'yourhost'
graylog_port = 2514

# How many days you want to pull articles for.
days_to_lookback = 30

# Which RSS feeds you want to ingest into Graylog.
feeds = [
    'https://www.ncsc.gov.uk/api/1/services/v1/all-rss-feed.xml',
    'https://securelist.com/feed/',
    'http://feeds.feedburner.com/NakedSecurity',
    'https://www.microsoft.com/security/blog/feed/',
    'https://www.malwarebytes.com/blog/feed/index.xml',
    'https://feeds.feedburner.com/TroyHunt',
    'https://blog.knowbe4.com/rss.xml',
    'https://blog.talosintelligence.com/feeds/posts/default/-/threats',
    'https://krebsonsecurity.com/feed/',
    'https://www.theregister.com/security/headlines.atom',
    'https://www.darkreading.com/rss.xml',
]
```
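To show how `days_to_lookback` might be applied to the feeds, here is a minimal sketch of the date filter. It assumes entries shaped like feedparser's, which expose parsed dates as UTC `time.struct_time` values under `published_parsed` (or `updated_parsed`, common in Atom feeds); the function name `recent_entries` is hypothetical, and skipping undated entries is a design choice of this sketch, not necessarily the script's behaviour.

```python
import calendar
import time
from typing import Any, Iterable, Optional


def recent_entries(entries: Iterable[dict[str, Any]], days_to_lookback: int,
                   now: Optional[float] = None) -> list[dict[str, Any]]:
    """Keep feed entries published within the last `days_to_lookback` days."""
    now = time.time() if now is None else now
    cutoff = now - days_to_lookback * 86400  # seconds per day
    recent = []
    for entry in entries:
        # feedparser stores dates as UTC struct_time; fall back to the
        # updated date for feeds that do not provide a published date.
        parsed = entry.get("published_parsed") or entry.get("updated_parsed")
        if parsed is None:
            continue  # skip undated entries rather than guess their age
        # calendar.timegm treats the struct_time as UTC, unlike time.mktime.
        if calendar.timegm(parsed) >= cutoff:
            recent.append(entry)
    return recent
```

With feedparser, the outer loop would be roughly: call `feedparser.parse(url)` for each URL in `feeds`, pass the result's `entries` to `recent_entries(..., days_to_lookback)`, and send each surviving entry's `title` and `link` to Graylog.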

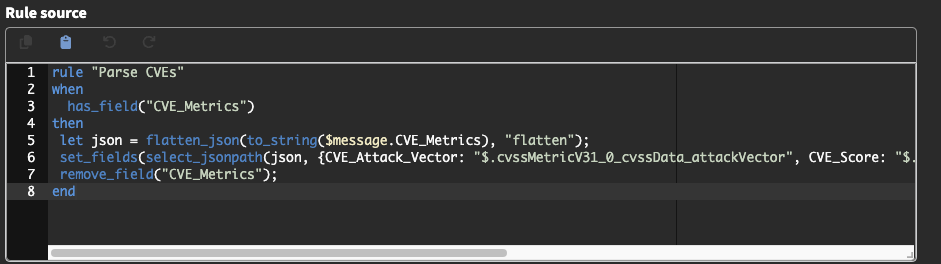

The script doesn’t do all the work, though. Some of the processing happens in Graylog pipelines. My coding skills aren’t the best, and manipulating the data in Graylog is sometimes a lot easier. For instance, the CVE details come back as a nested JSON object, which I’d rather work with in a pipeline than in Python.
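One way the Python side can stay thin is to lift only a few values to the top level and pass the rest of the nested CVE object through as a JSON string for a pipeline to unpack. The sketch below assumes the NVD CVE API 2.0 endpoint and its `pubStartDate`/`pubEndDate` parameters (which NVD limits to a 120-day range), plus the 2.0 response layout where each item in `vulnerabilities` holds a `cve` object with `id`, `published`, and `descriptions`. The function names and `_cve_*` field names are hypothetical, and the original script may query the API differently.

```python
import json
from datetime import datetime, timedelta, timezone
from typing import Any
from urllib.parse import urlencode

# NVD CVE API 2.0 endpoint (assumed; the script may use another version).
NVD_CVE_API = "https://services.nvd.nist.gov/rest/json/cves/2.0"


def nvd_query_url(since: datetime, until: datetime) -> str:
    """Build a query for CVEs published between two timezone-aware datetimes."""
    if until - since > timedelta(days=120):
        raise ValueError("NVD limits publication date ranges to 120 days")
    # Timestamps are converted to UTC and sent without an offset (assumption).
    fmt = "%Y-%m-%dT%H:%M:%S.000"
    params = {
        "pubStartDate": since.astimezone(timezone.utc).strftime(fmt),
        "pubEndDate": until.astimezone(timezone.utc).strftime(fmt),
    }
    return f"{NVD_CVE_API}?{urlencode(params)}"


def cve_to_fields(item: dict[str, Any]) -> dict[str, str]:
    """Lift a few values out of one `vulnerabilities` item; keep the rest nested."""
    cve = item["cve"]
    summary = next((d["value"] for d in cve.get("descriptions", [])
                    if d.get("lang") == "en"), "")
    return {
        "_cve_id": cve["id"],
        "_cve_published": cve.get("published", ""),
        "_cve_summary": summary,
        # Hypothetical field: the full object, left for a pipeline to flatten.
        "_cve_json": json.dumps(cve),
    }
```

The design choice here mirrors the paragraph above: Python only fetches and forwards, while unpacking metrics and other nested details is left to Graylog.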

I won’t go through each pipeline rule, as they’re all included in the content pack. As an example, though, if I just want to make the JSON object easier to work with and set some fields, a rule can flatten the JSON, set the fields from it, and then remove the original object.

Where can you get your hands on it?

Everything is on GitHub, including the content pack and the Python script, along with some instructions. You can find it here on GitHub. Please give me any feedback; I’d love to hear your ideas on where to take this concept next.

In my home environment, a cron job runs this script each day at 7 am, so everything is populated by the time I wake up to read the previous day’s news.

Conclusion

This is a quick proof-of-concept news feed within Graylog, and I may expand on it in the near future. I have some mad hatter ideas on how to take this further, using CVEs and Sigma rules to automatically implement relevant rules in the SIEM with the Sigma rule feature introduced in Graylog 5.0. For now, though, try it out and let us know what you think.

(Kyle Pearson is a Graylog Solution Engineer)