In “Terminator 2”, the T-800 does not win because humans worked harder. It wins because the same machine capability that made it dangerous was reprogrammed to fight for the defenders. Project Glasswing applies that same reprogramming to software security.

Claude Mythos Preview is Anthropic’s most powerful AI model, and Anthropic declined to release it publicly because it autonomously found thousands of zero-day vulnerabilities across every major operating system and browser, flaws that decades of expert review never caught. Anthropic’s response was Project Glasswing: a $100 million initiative that puts Mythos to work for defenders before adversaries build the same thing.

Most coverage treats this as an enterprise problem. It is not. Speed beats headcount here. A lean team that detects fast, investigates clean, and acts with confidence will outrun a large SOC buried in fragmented tools every time.

That is not a consolation. It is the advantage. Tight workflows, clean data, and the discipline that comes from never affording waste are exactly what AI-assisted detection needs. Teams with that foundation are built for this moment.

What Did Claude Mythos Actually Find?

According to Anthropic’s Frontier Red Team, Mythos autonomously identified a 27-year-old flaw in OpenBSD, a 16-year-old vulnerability in FFmpeg that automated tools had probed five million times without catching, and a 17-year-old remote code execution bug in FreeBSD that gives an unauthenticated attacker full root access from anywhere on the internet. No human guided the work after the initial request. Mythos also chained four separate vulnerabilities into a single browser exploit that escaped both the renderer and operating system sandboxes.

Anthropic did not train Mythos to be an offensive tool. These capabilities emerged as a byproduct of general improvements in code, reasoning, and autonomy. The same improvements that make the model better at patching vulnerabilities make it better at exploiting them, because those are the same underlying skill. Every AI lab moving its general-purpose models forward is on the same trajectory. The proliferation timeline is months, not years.

Project Glasswing is Anthropic’s coordinated defensive response: a $100 million initiative giving curated access to Mythos Preview to 12 major organizations including AWS, Apple, Cisco, CrowdStrike, Google, Microsoft, and NVIDIA, directed at patching critical software before adversaries can weaponize equivalent capabilities. As Fortune reported, Anthropic has privately warned top government officials that Mythos makes large-scale cyberattacks significantly more likely this year.

That coalition coordinates defenses at the global infrastructure level. It does not patch your environment. Over 99 percent of the vulnerabilities Mythos found remained unpatched at the time of the announcement. The defensive work still lands on your team, in your environment, on your timeline.

Why Lean Teams Are Better Positioned Than They Think

The instinct when reading about Claude Mythos is to assume that larger security teams with more resources are better equipped to respond. That assumption is wrong in the ways that matter most.

Large SOC teams carry real operational overhead. A 2026 survey found that SOC teams routinely dismiss up to 30 percent of incoming alerts, not out of negligence but out of necessity. When context arrives fragmented across disconnected consoles, even skilled analysts triage by instinct rather than evidence. Burnout-driven churn in SOC teams now exceeds 25 percent annually. Scale does not solve those problems. It compounds them.

Lean teams operate differently. When a team of two or three covers an entire environment, there is no room for disconnected tools or investigation workflows that require multiple platforms to complete. That constraint builds operational discipline that is difficult to manufacture at enterprise scale, and it maps directly onto what the post-Glasswing environment rewards. When a model like Claude Mythos can move from vulnerability identification to a working exploit chain without a human in the loop, detection speed matters more than detection breadth. The organizations that respond fastest are the ones with the clearest path from alert to action, not the most seats in their SOC.

Why AI in Security Creates More Work Instead of Less

There is a cycle that plays out in security operations so reliably that most practitioners can describe it without prompting. A vendor ships an AI-powered detection feature. Alert volume climbs 30 to 40 percent. Analysts spend more time dismissing noise than investigating real threats. Six months later, the feature is quietly turned off. In the next release cycle, the vendor announces a new AI-powered feature.

That cycle has a root cause. Vendors that prioritize autonomy over explainability end up layering human services on top of software that analysts cannot trust. For a lean team, that pattern is operationally destructive. A two-person security function that spends three hours a day triaging alerts that lead nowhere has lost the capacity to do anything else.

This is not a technology failure. It is an explainability failure. When an AI model fires an alert on a privileged account authenticating from an unusual location, the analyst needs the exact log events that triggered the alert, the historical baseline for that account, the confidence score, and the anomaly grade. Without that evidence chain, the analyst either trusts a conclusion they cannot verify or dismisses an alert they cannot confidently rule out. Under real operational pressure, most analysts make that choice inconsistently and rarely document which way they went. That is not a detection program. It is hope dressed up as infrastructure.

The fix is straightforward: AI has to show its work. Any AI feature in a lean team’s detection stack should pass one test. Can an analyst trace the alert to specific, inspectable log events within two minutes? If not, the feature is producing noise, not capability.

What Human-in-the-Loop Actually Requires

What analysts are signaling through adoption patterns is not resistance to AI. It is demand for AI that works inside existing accountability models, where conclusions are validated through evidence before action is taken. Four things have to be true for that to work.

Every AI-generated alert links directly to the underlying normalized, timestamped log events that produced it. Not a summary, not a score in isolation. The actual events the AI analyzed, accessible so an analyst can verify them independently. Regulatory frameworks from the EU AI Act to the Colorado AI Act increasingly require that high-risk AI-assisted decisions be supported by transparent, inspectable reasoning. For lean teams, this is also just good operations: the quality control mechanism that catches AI mistakes before they become the team’s mistakes.
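To make the evidence-link requirement concrete, here is a minimal Python sketch, assuming a simple in-memory event store keyed by event ID. The `LogEvent` and `Alert` types and the `resolve_evidence` and `is_verifiable` helpers are hypothetical illustrations, not Graylog's data model or API; in practice the lookup would be a search against your log platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class LogEvent:
    """A normalized log event (hypothetical shape); timestamps assumed UTC."""
    event_id: str
    timestamp: datetime
    source: str
    message: str


@dataclass
class Alert:
    """A hypothetical AI-generated alert that carries links to its evidence."""
    alert_id: str
    summary: str
    confidence: float       # detector-reported confidence, 0.0 to 1.0
    anomaly_grade: float    # detector-reported anomaly grade, 0.0 to 1.0
    evidence_ids: list[str] = field(default_factory=list)


def resolve_evidence(alert: Alert, store: dict[str, LogEvent]) -> tuple[list[LogEvent], list[str]]:
    """Look up every linked event; return (found events in time order, unresolved IDs)."""
    found = [store[i] for i in alert.evidence_ids if i in store]
    missing = [i for i in alert.evidence_ids if i not in store]
    return sorted(found, key=lambda e: e.timestamp), missing


def is_verifiable(alert: Alert, store: dict[str, LogEvent]) -> bool:
    """Verifiable only if the alert links to at least one event and every link resolves."""
    found, missing = resolve_evidence(alert, store)
    return bool(found) and not missing


# Usage: one alert with intact evidence, one that is only a summary and a score.
store = {
    "evt-2": LogEvent("evt-2", datetime(2025, 1, 6, 3, 14, tzinfo=timezone.utc), "vpn", "login from new ASN"),
    "evt-1": LogEvent("evt-1", datetime(2025, 1, 6, 3, 12, tzinfo=timezone.utc), "idp", "MFA push approved"),
}
traced = Alert("A-100", "Privileged login from unusual location", 0.87, 0.74, ["evt-2", "evt-1"])
opaque = Alert("A-101", "Suspicious activity", 0.91, 0.80)
print(is_verifiable(traced, store), is_verifiable(opaque, store))  # True False
```

The useful property is that an alert carrying only a summary and a score fails the check outright, and a link pointing at a dropped or expired event is reported as missing instead of being silently ignored.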

Anomaly detection shows its baseline. A deviation is only meaningful in context. When anomaly detection exposes the behavioral baseline alongside the alert, an analyst can assess whether something is significant or environmental in seconds, without reconstructing context from memory or separate tools.
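A behavioral baseline can be as simple as the mean and spread of a signal's recent history. The sketch below assumes a z-score as the deviation measure and a flagging cutoff of 3.0; both are illustrative choices, not how any particular product computes anomaly grades. It returns the baseline together with the verdict so the analyst sees the context alongside the flag.

```python
import math
from statistics import mean, stdev


def score_against_baseline(history: list[float], observed: float, threshold: float = 3.0) -> dict:
    """Return the baseline alongside the deviation so the analyst sees context, not just a verdict.

    `threshold` is an assumed z-score cutoff for flagging; tune it per signal.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical points to form a baseline")
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Flat history: no change means no deviation; any change is unbounded.
        z = 0.0 if observed == mu else math.copysign(math.inf, observed - mu)
    else:
        z = (observed - mu) / sigma
    return {
        "baseline_mean": mu,
        "baseline_stdev": sigma,
        "observed": observed,
        "z_score": z,
        "flagged": abs(z) >= threshold,
    }


# Usage (illustrative numbers): daily logins for one privileged account over a week, then today's count.
week = [4, 5, 6, 5, 4, 6, 5]
print(score_against_baseline(week, 12))
```

Returning the baseline mean and spread with the flag is the point: an analyst who can see that an account normally logs in about five times a day can judge a count of twelve in seconds.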

Every decision is auditable end to end. What the AI surfaced, what evidence the analyst reviewed, what the analyst decided, and when. For lean teams, that audit trail is the institutional memory that makes the team sharper over time without relying on headcount redundancy to catch gaps.
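An audit trail does not need heavy infrastructure to be useful. A minimal sketch, assuming an append-only JSON Lines log and a hypothetical three-value decision vocabulary, records what the AI surfaced, which evidence the analyst reviewed, what they decided, and when. The function takes any write callable so it can target a file, a queue, or a test list; `record_decision` and `AuditEntry` are illustrative names only.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable

# Hypothetical decision vocabulary; adapt to your own triage outcomes.
ALLOWED_DECISIONS = {"escalate", "dismiss", "monitor"}


@dataclass(frozen=True)
class AuditEntry:
    alert_id: str
    ai_summary: str                     # what the AI surfaced
    evidence_reviewed: tuple[str, ...]  # event IDs the analyst actually looked at
    analyst: str
    decision: str
    rationale: str
    decided_at: str                     # ISO 8601 timestamp in UTC


def record_decision(sink: Callable[[str], object], alert_id: str, ai_summary: str,
                    evidence_reviewed: list[str], analyst: str, decision: str,
                    rationale: str) -> AuditEntry:
    """Write one JSON line per decision; refuse entries that cite no evidence."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision {decision!r}")
    if not evidence_reviewed:
        raise ValueError("a decision must cite the evidence that was reviewed")
    entry = AuditEntry(alert_id, ai_summary, tuple(evidence_reviewed), analyst,
                       decision, rationale, datetime.now(timezone.utc).isoformat())
    sink(json.dumps(asdict(entry)) + "\n")
    return entry
```

In production you might pass a file handle's `write` method, for example `with open("audit.jsonl", "a", encoding="utf-8") as fh: record_decision(fh.write, ...)`. Rejecting decisions that cite no evidence enforces the accountability model at write time rather than during a later review.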

Automated actions stay bounded. AI-driven responses should operate only within parameters a human has explicitly defined, reviewed, and approved before the capability is enabled. The evidence criteria, action scope, and rollback procedure need to be documented before anything runs in production. For a lean team without the redundancy to absorb an outage triggered by a false positive, that documentation is protection, not overhead.
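Bounded automation can be expressed as a default-deny gate. In this minimal sketch, each automated action must match a human-approved policy that records its approver, evidence criteria, scope, and rollback procedure; anything else is refused. The `ActionPolicy` fields, the `authorize` function, and the example policy are hypothetical and stand in for whatever your response tooling actually supports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionPolicy:
    """A human-approved bound on one automated action (all fields hypothetical)."""
    action: str
    approved_by: str                 # who reviewed and approved it before enablement
    min_confidence: float            # evidence criterion
    min_evidence_events: int         # evidence criterion
    allowed_targets: frozenset[str]  # action scope
    rollback_procedure: str          # documented before anything runs


def authorize(policies: dict[str, ActionPolicy], action: str, target: str,
              confidence: float, evidence_events: int) -> tuple[bool, str]:
    """Default-deny gate: an action runs only inside a fully documented, approved policy."""
    policy = policies.get(action)
    if policy is None:
        return False, "no approved policy for this action"
    if not policy.approved_by or not policy.rollback_procedure.strip():
        return False, "policy lacks an approver or a documented rollback"
    if target not in policy.allowed_targets:
        return False, f"target {target!r} is outside the approved scope"
    if confidence < policy.min_confidence:
        return False, "confidence is below the approved threshold"
    if evidence_events < policy.min_evidence_events:
        return False, "too few linked evidence events"
    return True, "within approved bounds"


# Example policy (illustrative values only).
policies = {
    "disable_account": ActionPolicy(
        action="disable_account",
        approved_by="security-lead",
        min_confidence=0.9,
        min_evidence_events=3,
        allowed_targets=frozenset({"contractor-accounts"}),
        rollback_procedure="Re-enable in the IdP and notify the account owner.",
    )
}
```

Returning a reason with every refusal gives the audit trail something specific to record when automation declines to act.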

What Your Team Can Do This Week

The right response to Project Glasswing is not waiting for budget approval or a new platform evaluation. It is getting more from the architecture already in place.

Start by auditing what AI tools are already touching your security data, including the unofficial ones. Which analysts are using large language models for threat research? Are those tools connected to internal data? You cannot govern what you cannot see.

Run the two-minute evidence test on your current alert workflow. Pick ten recent alerts and try to trace each one to specific, inspectable log events in under two minutes. Where that fails is your highest-value improvement opportunity, before any new AI investment.
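Scoring the exercise takes only a stopwatch and a few lines of code. This sketch assumes an analyst has recorded, for each alert, the seconds it took to reach the specific log events behind it, or `None` if they never got there. The function name is hypothetical and the sample timings are illustrative, not measurements.

```python
from typing import Optional

TIME_LIMIT_SECONDS = 120  # the two-minute evidence test


def evaluate_trace_test(results: dict[str, Optional[float]],
                        limit: float = TIME_LIMIT_SECONDS) -> tuple[float, list[str]]:
    """Score a manual trace exercise.

    `results` maps each alert ID to the seconds an analyst needed to reach the
    specific log events behind it, or None if they never got there.
    Returns (pass rate, failed alert IDs sorted for review).
    """
    if not results:
        raise ValueError("no alerts were tested")
    failed = sorted(aid for aid, secs in results.items() if secs is None or secs > limit)
    pass_rate = (len(results) - len(failed)) / len(results)
    return pass_rate, failed


# Usage: ten recent alerts, timed by hand (illustrative timings).
sample = {
    "A-01": 45, "A-02": 70, "A-03": None, "A-04": 110, "A-05": 30,
    "A-06": 240, "A-07": 95, "A-08": None, "A-09": 60, "A-10": 118,
}
rate, failed = evaluate_trace_test(sample)
print(f"pass rate {rate:.0%}; start with {failed}")
```

The sorted list of failures doubles as a work queue: each entry is a detection whose evidence path needs fixing before any new AI investment.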

Map your log data gaps before they become investigation gaps. Dropped logs, inconsistent normalization, and retention gaps during outages are more dangerous in a post-Glasswing landscape because the attack chains that exploit them are cheaper to construct than they have ever been.
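One kind of log data gap, silent stretches in a source's event stream, can be found mechanically. This minimal sketch assumes you can export `(source, timestamp)` pairs with timestamps already normalized to UTC, and that a single `max_silence` threshold is reasonable; real sources usually need per-source thresholds. `find_ingestion_gaps` is a hypothetical helper, not a feature of any specific product.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def find_ingestion_gaps(events, max_silence: timedelta):
    """Find silent stretches per log source.

    `events` is an iterable of (source, timestamp) pairs with timestamps
    normalized to one timezone. A gap is any interval between consecutive
    events from the same source that exceeds `max_silence`.
    """
    by_source = defaultdict(list)
    for source, ts in events:
        by_source[source].append(ts)
    gaps = []
    for source, stamps in by_source.items():
        stamps.sort()
        for earlier, later in zip(stamps, stamps[1:]):
            if later - earlier > max_silence:
                gaps.append((source, earlier, later))
    return sorted(gaps)


# Usage (illustrative data): the firewall goes quiet for 85 minutes; the IdP reports every 30.
t0 = datetime(2025, 1, 6, 0, 0, tzinfo=timezone.utc)
events = [("firewall", t0 + timedelta(minutes=m)) for m in (0, 5, 10, 95, 100)]
events += [("idp", t0 + timedelta(minutes=m)) for m in (0, 30, 60, 90)]
for source, start, end in find_ingestion_gaps(events, timedelta(minutes=30)):
    print(f"{source}: no events between {start:%H:%M} and {end:%H:%M}")
```

This only finds gaps between events that did arrive. It will not flag a source that went quiet and never came back, or one that never reported at all, so pair it with a list of expected sources and a check of each source's most recent event. Mixing naive and timezone-aware timestamps makes the comparison raise `TypeError`, which is itself a useful signal that normalization is inconsistent.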

Graylog: Built for the Teams Doing Real Security Work

Graylog enables lean security teams to operationalize AI in their detection environments without sacrificing transparency, oversight, or control. By embedding AI into observable, evidence-rich workflows, Graylog helps teams detect anomalies, prioritize risk, and surface meaningful insights while maintaining clear visibility into how those insights are generated and acted upon.

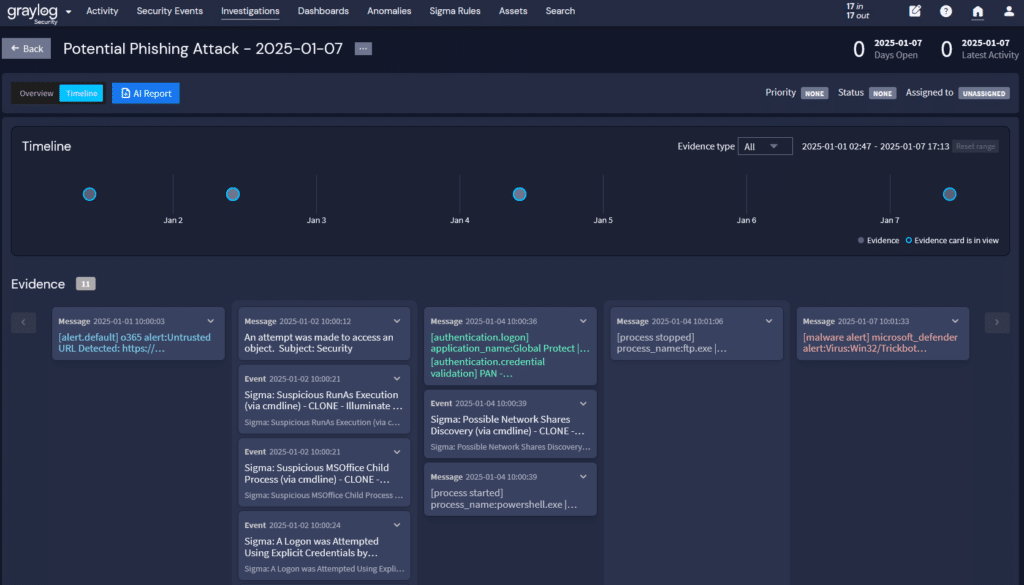

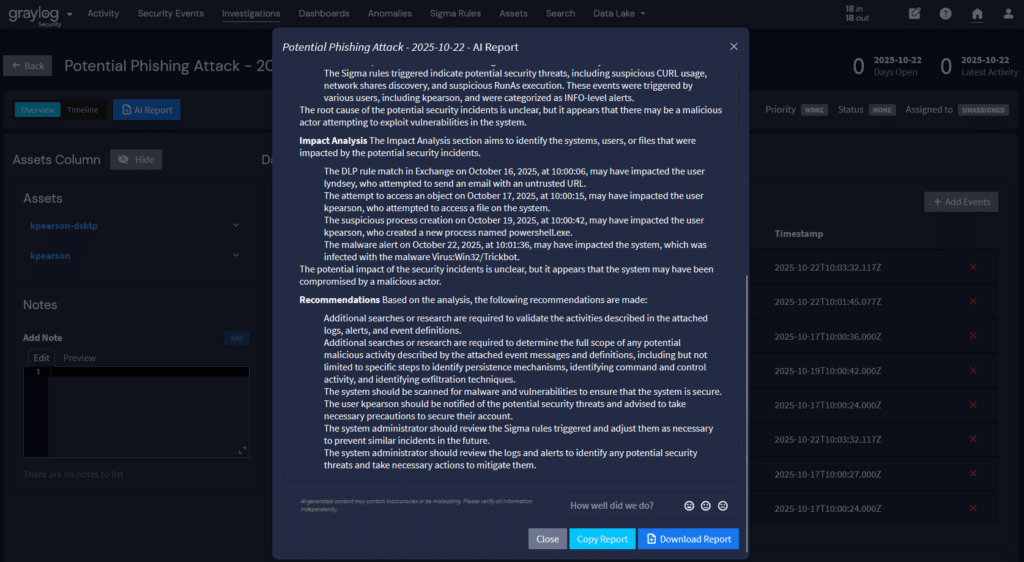

Anomaly detection in Graylog exposes confidence scores, anomaly grades, and behavioral baselines alongside every alert, giving analysts the context to make confident, documentable decisions without chasing evidence across disconnected tools. AI-driven threat prioritization groups related alerts using entity context, asset criticality, and threat campaign intelligence, so your team sees what matters most without wading through what does not. Every AI-generated investigation summary links directly to the underlying log events so analysts can verify conclusions independently. Investigation workflows move from alert to evidence to action inside a single interface, because lean teams cannot afford the time that tool-switching costs.

For lean teams responding to the changed threat economics that Project Glasswing represents, Graylog provides the speed, completeness, and accountability structure that AI-powered threat detection requires. The result is a detection program that a small team can actually run, that scales as the environment grows without scaling the complexity of the workflow, and that produces the evidence chain that makes every security decision defensible when scrutinized by leadership, auditors, regulators, or insurers.

The teams doing the most effective security work right now are not the largest teams. They are the teams with the clearest data, the most trustworthy detection, and the most direct path from alert to confident action. That is the work Graylog is built to support.

To see how Graylog can help you improve your audit readiness, contact us today.