Log management plays an important role in debugging Kubernetes clusters, improving their efficiency, and monitoring them for suspicious activity. Kubernetes is open-source cluster management software designed for the deployment, scaling, and operation of containerized applications. It takes its name from the Greek word for helmsman or pilot, and is pronounced “koo-burr-NET-eez.” Initially developed by Google, it was open-sourced in 2014 and has been maintained by the Cloud Native Computing Foundation since 2015.

How Does Logging for Containerized Applications Work?

Kubernetes is becoming more and more popular, but it is a specific platform that poses many challenges when it comes to logging. It is a highly distributed and dynamic system: a cluster often comprises hundreds of containers that can be restarted and terminated across dozens of different machines. This transient nature is further complicated by the fact that a Kubernetes cluster consists of several layers, each of which produces different types of logs. Here is an overview of the logging architecture of a Kubernetes cluster.

Container Logging

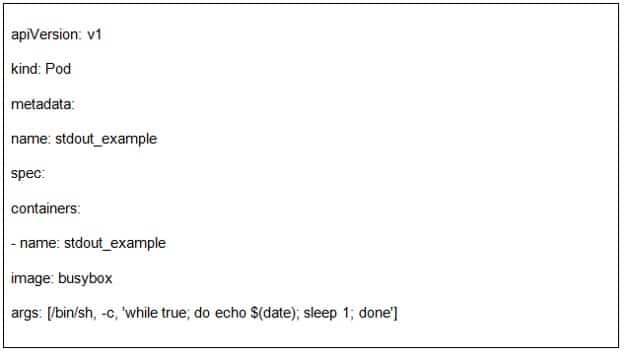

This is the first layer of a Kubernetes cluster, in which containerized apps generate logs. The usual method is to let the application itself output these logs to the standard output (stdout) and standard error (stderr) streams. This is an example of a container log to stdout:
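As a minimal sketch of this pattern, assuming a Python application, informational records can be sent to stdout and warnings and errors to stderr. The logger name `demo-app`, the helper `build_logger`, and the messages are invented for illustration and do not come from any particular application.

```python
import logging
import sys


class MaxLevelFilter(logging.Filter):
    """Let through only records strictly below a given level."""

    def __init__(self, max_level):
        super().__init__()
        self.max_level = max_level

    def filter(self, record):
        return record.levelno < self.max_level


def build_logger(name="demo-app", out=None, err=None):
    """Return a logger that writes INFO to `out` and WARNING+ to `err`.

    `out` and `err` default to the process's stdout and stderr, which is
    where a container runtime picks the output up.
    """
    out = sys.stdout if out is None else out
    err = sys.stderr if err is None else err

    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False   # avoid duplicate lines via the root logger
    logger.handlers.clear()    # make repeated calls idempotent

    fmt = logging.Formatter("%(asctime)s %(levelname)s %(name)s %(message)s")

    stdout_handler = logging.StreamHandler(out)
    stdout_handler.addFilter(MaxLevelFilter(logging.WARNING))
    stdout_handler.setFormatter(fmt)

    stderr_handler = logging.StreamHandler(err)
    stderr_handler.setLevel(logging.WARNING)
    stderr_handler.setFormatter(fmt)

    logger.addHandler(stdout_handler)
    logger.addHandler(stderr_handler)
    return logger


if __name__ == "__main__":
    log = build_logger()
    log.info("server started on port 8080")   # goes to stdout
    log.error("could not reach database")     # goes to stderr
```

When a program like this runs inside a container, both streams are captured by the container runtime, and `kubectl logs` shows them for the pod.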

Node Logging

When a containerized application writes to the stdout and stderr streams, the output is redirected to a logging driver. Usually, these logs will be saved to your host’s /var/log/containers directory. When it comes to node logging, it is important to implement log rotation; otherwise, the logs will eventually take up all the storage space on the node.
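To illustrate what size-based rotation achieves, here is a small Python sketch built on the standard library's `RotatingFileHandler`. It is not how the kubelet or the container runtime rotates files on a node; the file name `app.log`, the 1 KiB limit, and the three backups are arbitrary assumptions chosen to make the effect visible.

```python
import logging
import logging.handlers
import os
import tempfile


def write_with_rotation(log_dir, max_bytes=1024, backup_count=3, n_lines=500):
    """Write n_lines log records into log_dir with size-based rotation.

    Returns the sorted list of files left in log_dir afterwards.
    """
    path = os.path.join(log_dir, "app.log")
    handler = logging.handlers.RotatingFileHandler(
        path, maxBytes=max_bytes, backupCount=backup_count
    )
    logger = logging.getLogger("rotation-demo")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.addHandler(handler)
    try:
        for i in range(n_lines):
            logger.info("request %d handled", i)
    finally:
        # Detach and close so the files are flushed and released.
        logger.removeHandler(handler)
        handler.close()
    return sorted(os.listdir(log_dir))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as log_dir:
        for name in write_with_rotation(log_dir):
            size = os.path.getsize(os.path.join(log_dir, name))
            print(f"{name}: {size} bytes")
```

However many lines are written, at most `backup_count + 1` files remain and each stays under `max_bytes`, so disk usage is bounded. Older records are discarded, which is why logs should be shipped to a central store before rotation removes them.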

Graylog offers several ways to deal with this. You can set up index rotation and specify when you want your logs to be deleted, closed, or archived. For archival purposes, the logs can be moved to a new location and compressed to save even more space.

Cluster Logging

At the level of the cluster itself, several components can be logged, along with other important types of data such as audit logs and event logs.

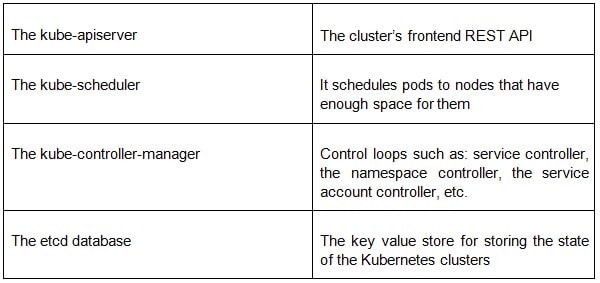

The master nodes consist of four basic services:

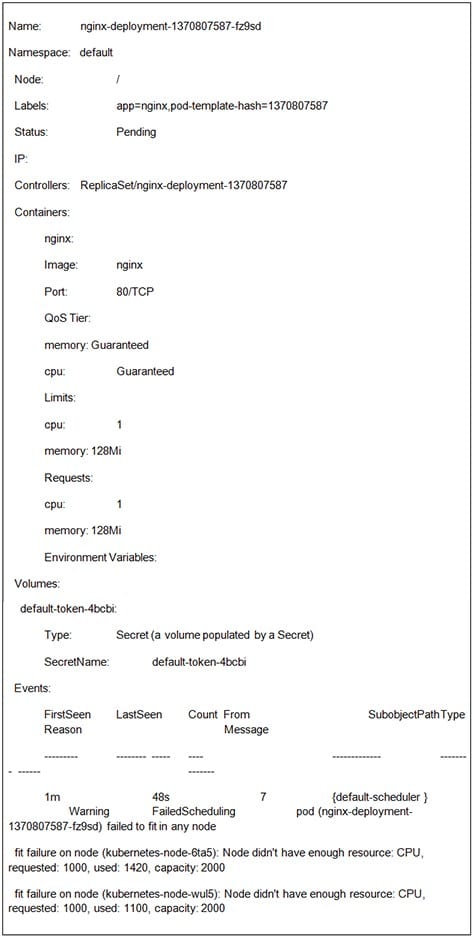

If, for example, we know that a pod (in this case: nginx-deployment-1370807587-fz9sd) failed to schedule, we can look at an event log to see exactly what the issue is:

The event log shows that the pod failed to schedule because there weren’t enough resources for it on any of the available nodes.
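The same lookup can be sketched in Python. The function below filters a list of events, shaped like the output of `kubectl get events -o json`, for `FailedScheduling` entries that belong to one pod. The helper name `failed_scheduling_messages` and the sample data are assumptions written by hand for illustration; only the pod name is taken from the example above, and the message text is not copied from a real cluster.

```python
def failed_scheduling_messages(event_list, pod_name):
    """Return the messages of FailedScheduling events for the given pod.

    `event_list` is a dict with an "items" list of core/v1 Event objects.
    """
    messages = []
    for event in event_list.get("items", []):
        involved = event.get("involvedObject", {})
        if (
            involved.get("kind") == "Pod"
            and involved.get("name") == pod_name
            and event.get("reason") == "FailedScheduling"
        ):
            messages.append(event.get("message", ""))
    return messages


# Hand-written sample; the values are illustrative, not real cluster output.
SAMPLE_EVENTS = {
    "kind": "List",
    "items": [
        {
            "type": "Warning",
            "reason": "FailedScheduling",
            "message": "0/3 nodes are available: 3 Insufficient cpu.",
            "involvedObject": {
                "kind": "Pod",
                "namespace": "default",
                "name": "nginx-deployment-1370807587-fz9sd",
            },
        },
        {
            "type": "Normal",
            "reason": "Scheduled",
            "message": "Successfully assigned default/other-pod to node-1",
            "involvedObject": {
                "kind": "Pod",
                "namespace": "default",
                "name": "other-pod",
            },
        },
    ],
}

if __name__ == "__main__":
    pod = "nginx-deployment-1370807587-fz9sd"
    for message in failed_scheduling_messages(SAMPLE_EVENTS, pod):
        print(f"{pod}: {message}")
```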

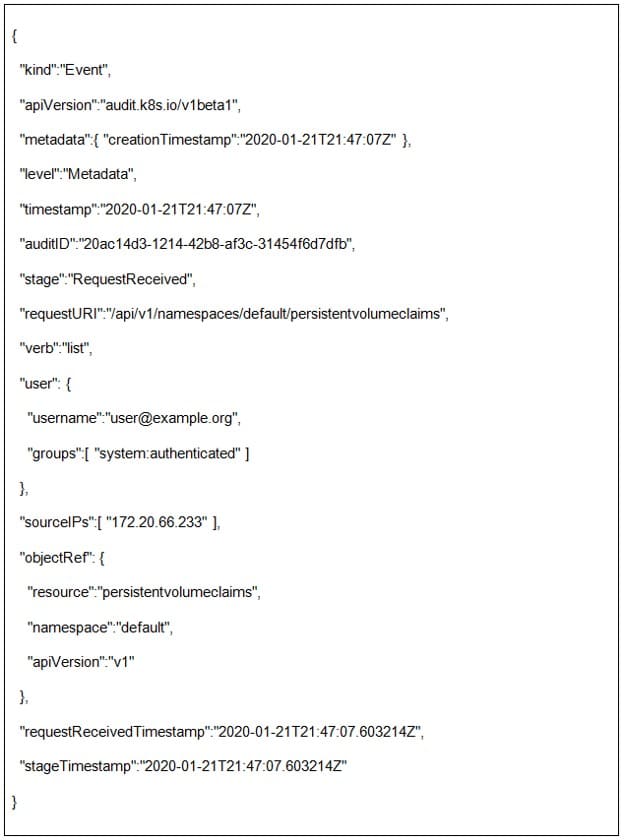

Audit Logs

Audit logs are some of the most crucial log files when it comes to log management. Companies have to comply with many regulatory guidelines and industry standards, and log management tools help expedite this by providing a framework for centralizing these files. With such a framework, you can choose which data to keep and for how long, benefit from archival and backup services, and use advanced search options to quickly find whatever you are looking for.

They are used for more than auditing purposes: they also tell us who did what on our systems, and when. An example of a typical audit policy log from a kube-apiserver request:
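As a rough sketch of how the "who did what, and when" answer can be pulled out of such an entry, the Python snippet below parses one JSON-formatted audit event. The field names follow the `audit.k8s.io/v1` Event type, but every value in `SAMPLE_LINE` (user, pod name, timestamp, IDs) is invented for illustration, and `summarize_audit_event` is a hypothetical helper rather than part of any tool.

```python
import json


def summarize_audit_event(line):
    """Extract who / what / when from one JSON-encoded audit event."""
    event = json.loads(line)
    ref = event.get("objectRef") or {}
    return {
        "who": (event.get("user") or {}).get("username"),
        "verb": event.get("verb"),
        "resource": ref.get("resource"),
        "namespace": ref.get("namespace"),
        "name": ref.get("name"),
        "when": event.get("requestReceivedTimestamp"),
        "status": (event.get("responseStatus") or {}).get("code"),
    }


# Invented sample; not taken from a real kube-apiserver audit log.
SAMPLE_LINE = json.dumps({
    "kind": "Event",
    "apiVersion": "audit.k8s.io/v1",
    "level": "Metadata",
    "auditID": "00000000-0000-0000-0000-000000000000",
    "stage": "ResponseComplete",
    "requestURI": "/api/v1/namespaces/default/pods/nginx-example",
    "verb": "delete",
    "user": {"username": "jane@example.com", "groups": ["system:authenticated"]},
    "sourceIPs": ["10.0.0.1"],
    "objectRef": {
        "resource": "pods",
        "namespace": "default",
        "name": "nginx-example",
        "apiVersion": "v1",
    },
    "responseStatus": {"code": 200},
    "requestReceivedTimestamp": "2024-01-01T12:00:00.000000Z",
    "stageTimestamp": "2024-01-01T12:00:00.050000Z",
})

if __name__ == "__main__":
    s = summarize_audit_event(SAMPLE_LINE)
    print(f'{s["when"]}: {s["who"]} {s["verb"]} '
          f'{s["resource"]}/{s["name"]} in {s["namespace"]} -> {s["status"]}')
```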

Why Is Monitoring Your Logging Architecture Important for Cluster Management?

Kubernetes is made up of many little moving parts, all working in unison to make it more efficient. But this also makes it trickier to correctly monitor and retain all the data they produce. Proper log retention and log monitoring are must-have features of a quality log management solution, and this is doubly important for a platform such as Kubernetes, whose logs can quickly take up a lot of space.

This is why the ability to scale with the volume of incoming data is such a crucial feature of log management software.
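A back-of-the-envelope calculation shows how quickly the volume adds up. The sketch below multiplies out a steady log rate over a retention period; the function name and all input figures are hypothetical assumptions, not measurements from a real cluster.

```python
SECONDS_PER_DAY = 86_400


def estimated_storage_gib(containers, lines_per_second, avg_line_bytes, retention_days):
    """Estimate raw log storage in GiB for a steady logging rate.

    Ignores compression, indexing overhead, and bursts.
    """
    bytes_per_day = containers * lines_per_second * avg_line_bytes * SECONDS_PER_DAY
    return bytes_per_day * retention_days / 2**30


if __name__ == "__main__":
    # Assumed workload: 300 containers, 5 lines/s each, 200 bytes per line,
    # kept for 30 days.
    print(f"{estimated_storage_gib(300, 5, 200, 30):.1f} GiB")
```

With these assumed figures, the raw logs alone come to roughly 724 GiB over 30 days, before any indexing overhead or compression is taken into account.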